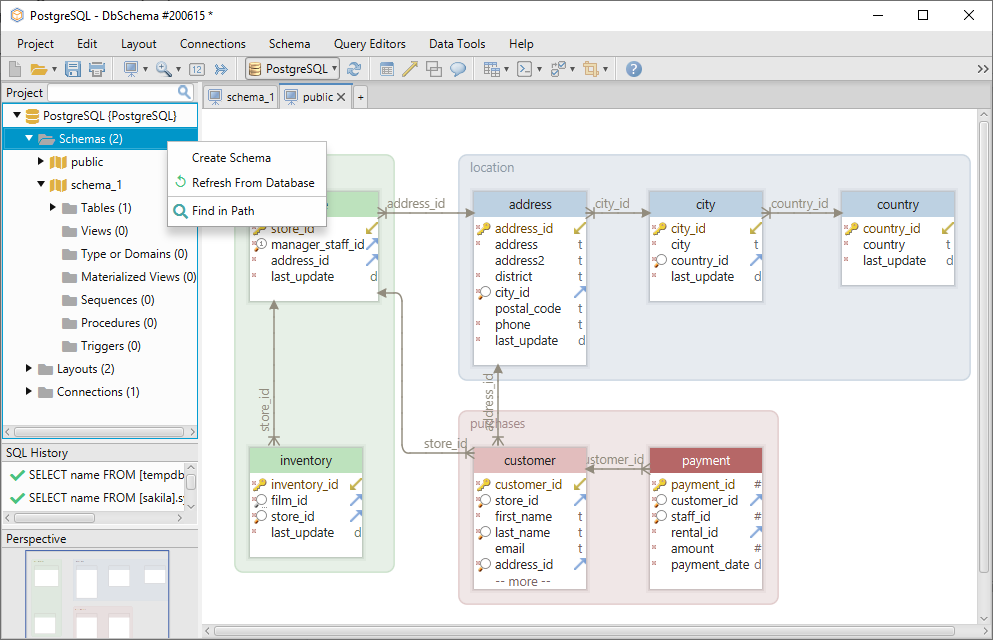

The schema defines the structure of the graph, including the types of nodes and edges that will be used to represent the entities and relationships. Once the relevant entities and relationships have been identified, they can be organized into a schema for the knowledge graph. Automated methods use natural language processing (NLP) techniques to extract the entities and relationships from the ontology documentation. Manual inspection involves reading the ontology documentation and identifying the relevant entities and relationships. The ontology can be studied using various techniques including manual inspection and automated methods. Insurance risk ontology defines the concepts and relationships that are relevant to the insurance domain, such as policy, risk and premium. An ontology is a formal representation of the knowledge in a domain, including the concepts, relations and constraints that define the domain. The first step in generating a knowledge graph is to study the relevant ontology and identify the entities and relationships that are relevant to the domain. Approach Step 1: Studying the ontology and identifying entities and relations Finally, it provides a way to integrate data from different sources, including structured and unstructured data.īelow is a 4 step approach. Second, it provides a way to represent knowledge in a structured and semantically rich format, which makes it easier to analyze and interpret. First, it provides a way to organize and access data that is easy to query and update. There are several benefits to using a knowledge graph in the insurance industry. This can include nodes, edges and properties where nodes represent entities, edges represent relationships between entities and properties represent at-tributes of entities and relationships. Knowledge graphs provide a way to organize and access this data in a structured and semantically rich format. 
The insurance industry is faced with many challenges, including the need to manage large amounts of data in a way that is both efficient and effective. Below we provide an example of an insurance company, but the approach is universal. The approach outlined here combines ontology-driven and natural language-driven techniques to build a knowledge graph that can be easily queried and updated without extensive engineering efforts to build bespoke software. Combining ontology and natural language techniques The creation and search queries can be customized to the platform in which the graph is stored - such as Neo4j, AWS Neptune or Azure Cosmos DB. You may also need to clear your cache.The second step is to use an LLM as an intermediate layer to take natural language text inputs and create queries on the graph to return knowledge. Tool not working? Make sure that you have completed all required fields correctly. And once you’ve filled out all the info and hit “Submit” you’ll have the option to run a quick test of your markup by clicking “Test Your Schema”.

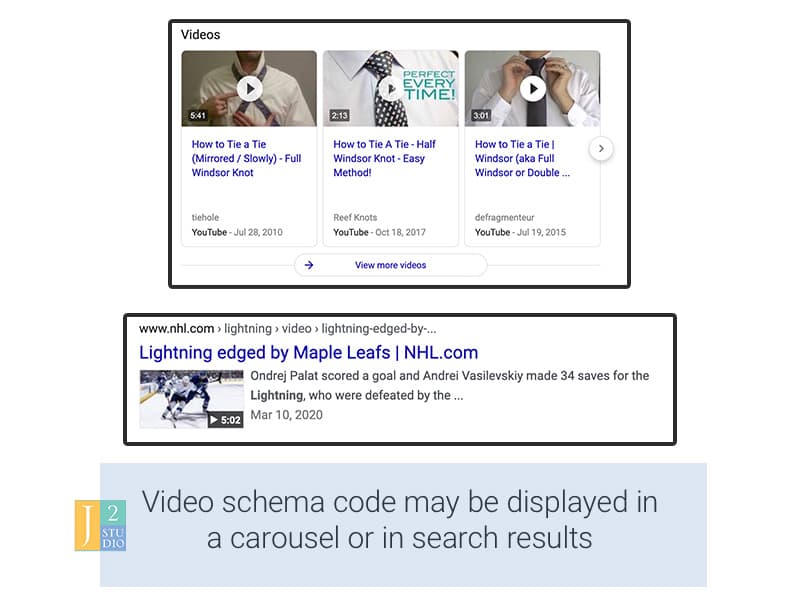

This tool is a great time saver for any SEO agency with numerous client sites to optimize. This will allow you to generate additional schema markup for events, products, actions, creative works and much more. If you want to really dial in your JSON LD, click the blue “All Schema JSON LD” button. It’s a bit more work than the verified listing tool, but you still won’t have to manually write your markup! Here you’ll have to select the type of schema you want to generate and fill the missing fields. If you DO NOT have a verified GMB listing, click the red “Local Markup JSON LD” button to get to the next step. You’ll have the advantage of the tool pulling a lot of your info from the listing itself, but you’ll want to fill out as much detail as you can on this simple form. Click the green “Verified GMB w/ Address” button above to reach step two. This tool is the easiest to use for sites that have a verified GMB listing.
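
To make Step 1 concrete, here is a minimal Python sketch of a schema distilled from an insurance risk ontology, plus a small in-memory graph that refuses nodes and edges the schema does not allow. The node types `Policy`, `Risk` and `Premium` come from the examples in the text; the relationship names `COVERS` and `HAS_PREMIUM`, the property names, the sample data and the `KnowledgeGraph` class are hypothetical, chosen for illustration rather than taken from any particular ontology or graph database.

```python
from dataclasses import dataclass, field

# Hypothetical schema distilled from an insurance risk ontology.
NODE_TYPES = {"Policy", "Risk", "Premium"}
# Relationship type -> (allowed source node type, allowed target node type).
EDGE_TYPES = {
    "COVERS": ("Policy", "Risk"),
    "HAS_PREMIUM": ("Policy", "Premium"),
}


@dataclass
class Node:
    node_id: str
    label: str
    properties: dict = field(default_factory=dict)


@dataclass
class Edge:
    source: str
    target: str
    label: str
    properties: dict = field(default_factory=dict)


class KnowledgeGraph:
    """In-memory graph that rejects nodes and edges not allowed by the schema."""

    def __init__(self) -> None:
        self.nodes: dict[str, Node] = {}
        self.edges: list[Edge] = []

    def add_node(self, node_id: str, label: str, **properties) -> None:
        if label not in NODE_TYPES:
            raise ValueError(f"unknown node type: {label}")
        self.nodes[node_id] = Node(node_id, label, properties)

    def add_edge(self, source: str, target: str, label: str, **properties) -> None:
        if label not in EDGE_TYPES:
            raise ValueError(f"unknown edge type: {label}")
        src_type, dst_type = EDGE_TYPES[label]
        # Raises KeyError if either endpoint has not been added yet.
        if self.nodes[source].label != src_type or self.nodes[target].label != dst_type:
            raise ValueError(f"{label} must connect {src_type} -> {dst_type}")
        self.edges.append(Edge(source, target, label, properties))

    def neighbors(self, node_id: str, label: str) -> list[str]:
        """IDs of nodes reached from node_id over edges of the given type."""
        return [e.target for e in self.edges if e.source == node_id and e.label == label]


kg = KnowledgeGraph()
kg.add_node("pol-1", "Policy", holder="Acme Ltd")
kg.add_node("risk-flood", "Risk", category="flood")
kg.add_node("prem-1", "Premium", amount=1200.0, currency="USD")
kg.add_edge("pol-1", "risk-flood", "COVERS")
kg.add_edge("pol-1", "prem-1", "HAS_PREMIUM")
print(kg.neighbors("pol-1", "COVERS"))  # ['risk-flood']
```

Keeping the allowed endpoint types next to each relationship means a mis-extracted triple (for example a `Risk` that "covers" a `Policy`) is caught when it is loaded rather than when someone queries the graph.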
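
The LLM layer from the second step can be sketched as a function that embeds the graph schema in a prompt, asks a model for a query, and checks the result before it is run. This minimal Python sketch assumes a Cypher target (the query language of Neo4j, which AWS Neptune also supports through openCypher); Azure Cosmos DB's graph API uses Gremlin and would need a different prompt. The schema text, prompt wording, `question_to_query` helper and keyword-based read-only check are all hypothetical, and `fake_llm` is a stand-in for whatever model client you actually call.

```python
from typing import Callable

# Hypothetical schema summary for the insurance graph, given to the LLM so it
# only uses labels and relationships that actually exist.
SCHEMA = (
    "Node labels: Policy(holder), Risk(category), Premium(amount, currency)\n"
    "Relationships: (Policy)-[:COVERS]->(Risk), (Policy)-[:HAS_PREMIUM]->(Premium)"
)

PROMPT_TEMPLATE = (
    "Translate the question into a single read-only Cypher query.\n"
    "Use only this schema:\n{schema}\n"
    "Return the query and nothing else.\n"
    "Question: {question}\n"
    "Cypher:"
)

# Crude guard: reject generated queries that contain write clauses.
WRITE_KEYWORDS = {"CREATE", "MERGE", "DELETE", "SET", "REMOVE", "DROP"}


def build_prompt(question: str) -> str:
    return PROMPT_TEMPLATE.format(schema=SCHEMA, question=question)


def is_read_only(query: str) -> bool:
    # Whitespace tokenization only; a write keyword glued to punctuation would slip through.
    return not any(token in WRITE_KEYWORDS for token in query.upper().split())


def question_to_query(question: str, llm: Callable[[str], str]) -> str:
    """Ask the LLM for a Cypher query and refuse anything that writes to the graph."""
    query = llm(build_prompt(question)).strip()
    if not is_read_only(query):
        raise ValueError(f"refusing to run a write query: {query}")
    return query


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; always returns the same canned query.
    return "MATCH (p:Policy)-[:COVERS]->(r:Risk {category: 'flood'}) RETURN p.holder"


query = question_to_query("Which policyholders are covered for flood?", fake_llm)
print(query)
```

Passing the model in as a plain callable keeps the sketch independent of any particular LLM SDK. The keyword check is deliberately crude; running generated queries under a database user with read-only permissions, where the platform supports it, is a sturdier safeguard.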
